Explore Tech Topics That Matter

From cutting-edge hardware to gaming trends, discover comprehensive coverage across all digital frontiers

Hardware

Components, PCs and peripherals

High tech

Innovations and new technologies

Internet

Web, social media and online services

Marketing

Digital marketing and SEO

News

Tech and computing news

Smartphones

Phones, tablets and apps

Video games

Video games, consoles and gaming

Tech Intelligence, Simplified

We break down complex technology into accessible insights that help you understand the digital landscape. Whether you're a tech enthusiast, professional, or curious learner, our content keeps you ahead of the curve.

- Daily updates on industry developments

- Comprehensive hardware reviews and comparisons

- Deep dives into emerging technologies

- Gaming industry analysis and trends

Latest articles

Our recent publications

Comprehensive blueprint for crafting a high-performance media server using NVIDIA Shield TV and external storage options

The NVIDIA Shield TV is renowned as a versatile streaming device, capable of doubling up as a formidable media server. E...

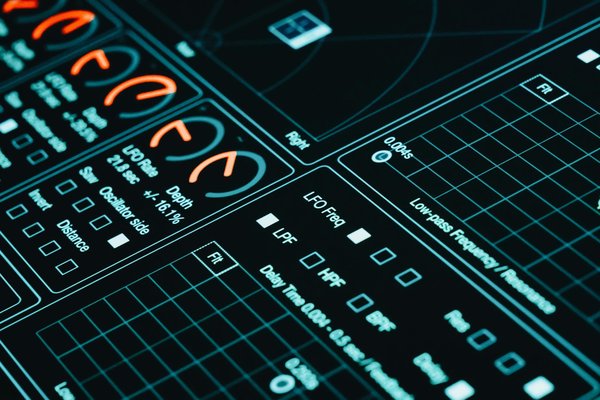

Mastering music production: the definitive blueprint for crafting your custom PC for FL Studio

Before diving into building a custom PC for music production, it is crucial to comprehend the core system needs, especia...

The definitive handbook for building a lightning-fast home network using Ubiquiti UniFi Switch 24 PoE and access points

The Ubiquiti UniFi Switch 24 PoE is an essential tool for anyone looking to enhance their home network optimization thro...

Building a Bulletproof Cybersecurity Framework for Fintech: Essential Steps for Robust Protection

In an era where digital threats proliferate, establishing a robust Cybersecurity Framework in the fintech industry is im...

Fast audio to text conversion with transcri

In an era where efficiency is crucial, the ability to swiftly convert spoken words into written form has become invaluab...

Key considerations for building an effective AI-driven content recommendation system

In today's digital age, AI content recommendation systems are pivotal in enhancing user engagement and retention. These ...

Revolutionizing healthcare diagnostics: cutting-edge approaches to real-time AI integration

Artificial intelligence has revolutionized healthcare diagnostics, introducing unprecedented precision and efficiency. C...

Unlocking sustainability with PLM software in product development

PLM software transforms product development by centralizing data and streamlining collaboration across teams, reducing w...

Achieving unmatched uptime: an ultimate guide to configuring PostgreSQL with read replicas

PostgreSQL is a robust, open-source database management system used for a variety of applications, such as web services ...

Comprehensive handbook: establishing a robust VPN with Cisco AnyConnect for your enterprise network security

Cisco AnyConnect is a versatile VPN solution that offers significant enterprise security benefits. This robust software ...

Link obfuscation in Webflow: boost SEO and protect links

Link obfuscation in Webflow is a smart technique for anyone looking to boost SEO while protecting valuable links. By hid...

Unlocking Kubernetes RBAC: your ultimate step-by-step manual for fine-tuned access control

Before diving into the intricacies of Kubernetes Role-Based Access Control (RBAC), it's essential to grasp the fundament...

A/B testing unveiled: transform your marketing strategies

What if you could increase your conversion rates by 49% on average with a simple methodology? According to VWO's 2024 op...

Boosting brand visibility and interaction: harnessing the power of virtual events

Virtual events have become crucial for enhancing brand visibility in today's digital landscape. These events leverage te...

How does mobile optimization fuel conversion rate growth in e-commerce stores?

In today's digital landscape, mobile optimization plays a crucial role in the success of an e-commerce platform. With a ...

Top strategies for boosting website loading speed and enhancing user experience

Fast website performance is crucial for retaining visitors, enhancing user satisfaction, and improving the SEO impact....

Transform your imagination using AI character design tools

AI character design tools revolutionise creativity by turning your ideas into vivid digital personas effortlessly. These...

Unlocking creativity with AI character creation tools

AI character creation tools transform imagination into detailed, dynamic personas faster than traditional methods. They ...

Elevate your business with effective maintenance management software

Effective maintenance management software can transform how your business operates. By streamlining processes, reducing ...

Overcoming hurdles and discovering solutions for DevOps implementation in large enterprises

In the transition to DevOps, organizations often face numerous challenges, especially in large enterprises. A significan...

Unleashing augmented reality: transforming corporate training and development

In the ever-evolving landscape of corporate training, technology has become the catalyst for innovation and efficiency. ...

Unlocking speed: how low-code platforms enhance software development efficiency

In the fast-paced world of technology, the ability to develop software quickly and efficiently is crucial for businesses...

Infinite Landscapes: The Impact of Procedural Generation on Survival Game Environments

Procedural generation is a game design technique where content is created algorithmically rather than manually. This met...

Innovative Tactics for Smoothly Incorporating Voice Recognition into Narrative Video Games

Introducing voice recognition technology in narrative video game development is revolutionising player interactivity. Th...

Mastering the Maze: Key Obstacles in Crafting Seamless Cross-Platform Multiplayer Games

Cross-Platform Development has revolutionised the creation of multiplayer games, allowing gamers to connect and play wit...

Never Miss a Tech Beat

Get the latest analyses, guides, and breaking news delivered straight to your inbox. Join thousands of readers who stay informed about the technologies shaping our future.

Join →

Frequently Asked Questions

How often is new content published?

We publish fresh articles daily across our various categories including hardware, smartphones, gaming, and digital marketing. Our editorial calendar ensures consistent coverage of both breaking news and evergreen topics that matter to tech enthusiasts.

Who writes the articles on Datacraftex?

Our content is created by a diverse team of technology journalists, industry professionals, and subject matter experts with years of experience in their respective fields. Each contributor brings unique insights and hands-on expertise to ensure accurate, valuable information for our readers.

Can I suggest topics for future articles?

Absolutely! We value reader input and encourage topic suggestions. You can reach out through our contact form or social media channels. While we can't cover every request, we regularly review suggestions and incorporate them into our editorial planning.

Is the content suitable for beginners?

Yes! While we cover advanced topics, our writers aim to make complex technology accessible to all knowledge levels. We include clear explanations, avoid unnecessary jargon, and provide context that helps both newcomers and experienced tech enthusiasts gain value from our articles.